Data and Machine Learning Framework

A Strategic Approach to Supervising and Assessing AI Capabilities

Introduction

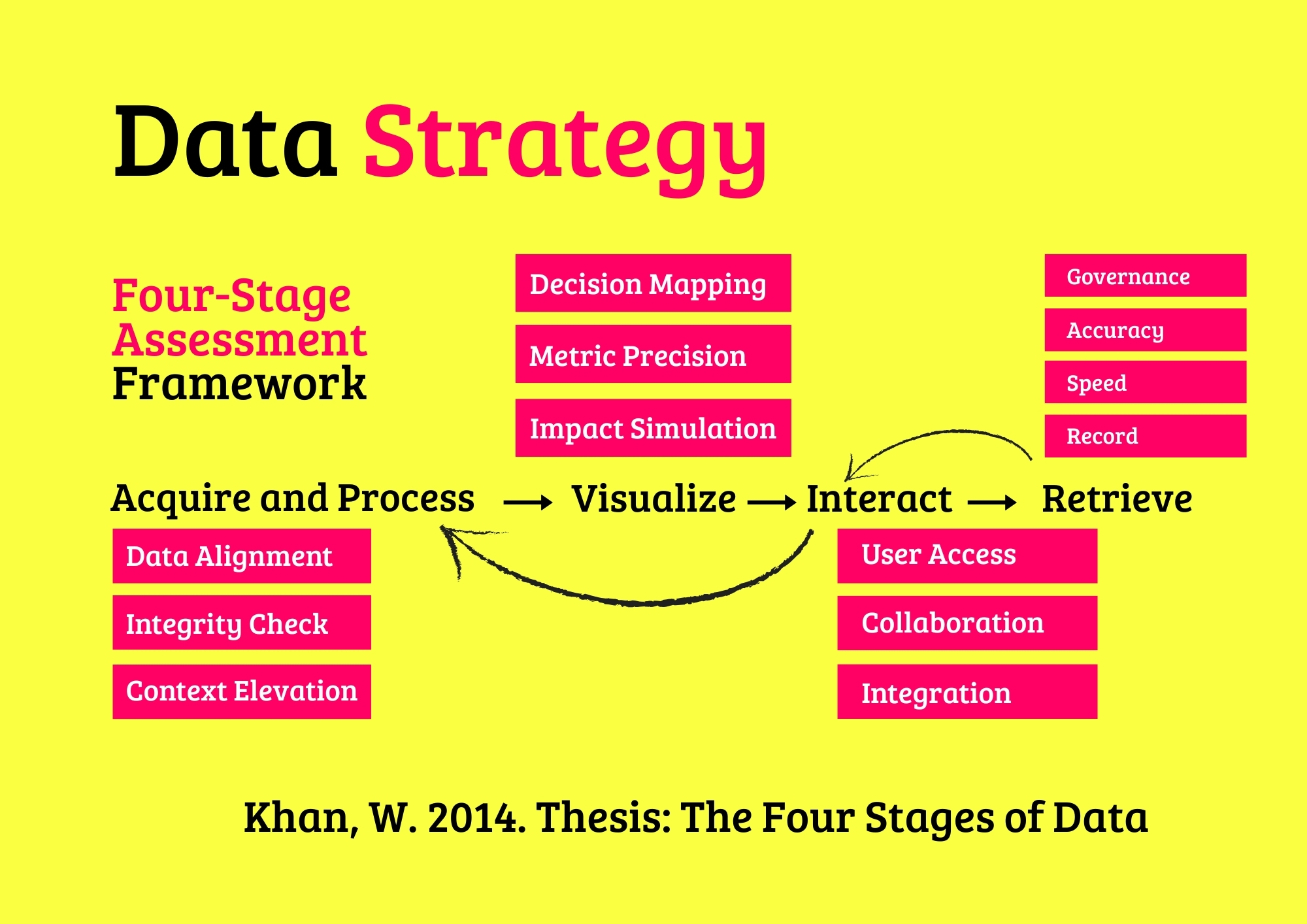

In an era where data fuels innovation, machine learning (ML) transforms raw information into predictive and actionable intelligence, driving organizational success across industries. The Data and Machine Learning Framework provides a structured methodology for supervising and assessing ML ecosystems, ensuring models are accurate, ethical, and scalable. Anchored in our core Four-Stage Platform—Acquire and Process, Visualize, Interact, and Retrieve—this framework enables organizations to build AI solutions that balance technical excellence with responsible governance.

Tailored for entities ranging from startups to global institutions, the framework integrates principles from data science, statistical modeling, and ethical AI standards such as IEEE’s Ethically Aligned Design and ISO/IEC 38507. By addressing model performance, ethical integrity, scalability, and innovation, it empowers organizations to deliver AI-driven outcomes that foster trust, mitigate risks, and align with sustainability goals.

Whether for a small business personalizing customer experiences, a medium-sized firm optimizing operations, a large corporate scaling AI globally, or a public entity enhancing civic services, this framework paves the way for ML mastery.

Theoretical Context: The Four-Stage Platform

Structuring Machine Learning for Supervision and Assessment

The Four-Stage Platform—(i) Acquire and Process, (ii) Visualize, (iii) Interact, and (iv) Retrieve—offers a disciplined lens for managing ML lifecycles. Informed by computational theory, decision science, and responsible AI principles, this framework emphasizes iterative supervision to ensure model reliability and fairness. Each stage is evaluated through sub-layers addressing technical accuracy, ethical compliance, operational efficiency, and innovation.

The framework incorporates 40 ML practices across four categories (Data Preparation, Model Development, Validation Techniques, and Operational Integration), providing a comprehensive approach to diverse needs, from predictive analytics to generative AI. This structured methodology enables organizations to navigate ML complexities, ensuring solutions are robust, inclusive, and aligned with global ethical standards.

Core Machine Learning Practices

ML practices are categorized by their objectives, enabling precise supervision. The four categories—Data Preparation, Model Development, Validation Techniques, and Operational Integration—encompass 40 practices, each tailored to specific AI needs. Below, the categories and practices are outlined, supported by applications from data science and AI governance.

1. Data Preparation

Data Preparation practices ensure high-quality inputs, grounded in data engineering, critical for model accuracy.

- 1. Data Cleaning: Removes errors (e.g., missing values).

- 2. Feature Engineering: Creates predictors (e.g., ratios).

- 3. Normalization: Scales data (e.g., z-scores).

- 4. Encoding: Converts categories (e.g., one-hot).

- 5. Sampling: Balances datasets (e.g., SMOTE).

- 6. Dimensionality Reduction: Simplifies features (e.g., PCA).

- 7. Data Augmentation: Enhances datasets (e.g., image flips).

- 8. Outlier Removal: Filters anomalies.

- 9. Temporal Alignment: Syncs time series.

- 10. Metadata Tagging: Tracks lineage.
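Three of the practices above (Data Cleaning, Normalization, and Encoding) can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not the framework's own tooling; the function names and the sample records are hypothetical, and a production pipeline would more likely rely on a dedicated data library.

```python
from statistics import mean, pstdev


def drop_missing(rows):
    """Data cleaning in its simplest form: discard rows that contain None."""
    return [row for row in rows if None not in row.values()]


def z_scores(values):
    """Normalization: rescale to zero mean and unit population standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # A constant column has no spread; return zeros rather than divide by zero.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def one_hot(labels):
    """Encoding: one 0/1 column per category, columns in sorted category order."""
    categories = sorted(set(labels))
    vectors = [[1 if label == c else 0 for c in categories] for label in labels]
    return categories, vectors


# Hypothetical records, invented for illustration only.
rows = [
    {"spend": 10.0, "channel": "web"},
    {"spend": None, "channel": "store"},
    {"spend": 30.0, "channel": "store"},
    {"spend": 20.0, "channel": "web"},
]
clean = drop_missing(rows)
scaled_spend = z_scores([r["spend"] for r in clean])
categories, encoded = one_hot([r["channel"] for r in clean])
```

Dropping rows is the bluntest form of cleaning; imputing missing values is a common alternative when data is scarce.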

2. Model Development

Model Development practices build robust algorithms, leveraging statistical learning, essential for predictive power.

- 11. Linear Models: Fits simple trends (e.g., regression).

- 12. Decision Trees: Maps decisions (e.g., churn).

- 13. Random Forests: Improves accuracy by combining many decision trees (e.g., bagged ensembles).

- 14. Gradient Boosting: Refines predictions (e.g., XGBoost).

- 15. Neural Networks: Captures complexity (e.g., CNNs).

- 16. Clustering: Groups data (e.g., k-means).

- 17. Bayesian Models: Incorporates priors.

- 18. NLP Models: Processes text (e.g., BERT).

- 19. Time Series Models: Forecasts trends (e.g., ARIMA).

- 20. Reinforcement Learning: Optimizes actions.
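As an illustration of the simplest entry in this list, Linear Models, the sketch below fits a straight line by ordinary least squares. It assumes a single predictor and uses the closed-form solution; the function name `fit_line` is hypothetical and not part of the framework.

```python
def fit_line(xs, ys):
    """Return (slope, intercept) minimizing squared error for y = slope * x + intercept."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equally long sequences with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of squared deviations of x, and of co-deviations of x and y.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("all x values are identical; slope is undefined")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(slope, intercept, x):
    """Apply the fitted line to a new input."""
    return slope * x + intercept
```

For example, `fit_line([1, 2, 3, 4], [3, 5, 7, 9])` recovers a slope of 2 and an intercept of 1, since those points lie exactly on y = 2x + 1.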

3. Validation Techniques

Validation practices ensure model reliability, rooted in statistical rigor, vital for trust.

- 21. Cross-Validation: Tests robustness (e.g., k-fold).

- 22. Hyperparameter Tuning: Optimizes settings (e.g., grid search).

- 23. Bias Detection: Flags unfairness (e.g., fairness metrics).

- 24. Overfitting Checks: Prevents memorization.

- 25. Confusion Matrices: Evaluates classification.

- 26. ROC Curves: Measures classifier performance across decision thresholds.

- 27. Residual Analysis: Checks errors.

- 28. Explainability Tools: Clarifies decisions (e.g., SHAP).

- 29. Stress Testing: Simulates extremes.

- 30. A/B Testing: Compares models.
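Two of the practices above, Cross-Validation and Confusion Matrices, can be sketched as follows. This is a minimal standard-library illustration under stated assumptions: the folds are contiguous and unshuffled, the task is binary classification, and all function names are hypothetical.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_idx, test_idx) pairs; each sample lands in exactly one test fold."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    # Spread the remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, test_idx
        start += size


def confusion_counts(y_true, y_pred, positive=1):
    """Count true/false positives and negatives for a binary classifier."""
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for truth, pred in zip(y_true, y_pred):
        if pred == positive:
            counts["tp" if truth == positive else "fp"] += 1
        else:
            counts["fn" if truth == positive else "tn"] += 1
    return counts


def precision_recall(counts):
    """Derive precision and recall; return 0.0 when a denominator is zero."""
    predicted_pos = counts["tp"] + counts["fp"]
    actual_pos = counts["tp"] + counts["fn"]
    precision = counts["tp"] / predicted_pos if predicted_pos else 0.0
    recall = counts["tp"] / actual_pos if actual_pos else 0.0
    return precision, recall
```

In practice the sample order is usually shuffled before splitting, and time-series data calls for splits that respect chronology instead.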

4. Operational Integration

Operational Integration practices embed models in workflows, grounded in MLOps, key for scalability.

- 31. Model Deployment: Launches APIs (e.g., Flask).

- 32. Containerization: Packages models (e.g., Docker).

- 33. Monitoring Drift: Tracks shifts (e.g., feature drift).

- 34. AutoML Pipelines: Automates workflows.

- 35. Versioning: Manages updates (e.g., MLflow).

- 36. Scalability Testing: Ensures growth.

- 37. Rollback Systems: Reverts failures.

- 38. Compliance Audits: Meets ethical standards.

- 39. User Training: Educates stakeholders.

- 40. Feedback Loops: Refines models.
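Monitoring Drift can be made concrete with a drift statistic. The sketch below uses the Population Stability Index (PSI), one common choice that the framework itself does not prescribe. The bin edges, the `eps` floor for empty bins, and the sample values are all assumptions made for illustration.

```python
import math


def bin_shares(values, edges, eps=1e-6):
    """Share of values per bin; bins are (-inf, e0), [e0, e1), ..., [e_last, +inf)."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) + 1)
    for v in values:
        # The bin index equals the number of edges at or below the value.
        counts[sum(v >= e for e in edges)] += 1
    # Floor each share at eps so the logarithm below is always defined.
    return [max(c / len(values), eps) for c in counts]


def psi(baseline, current, edges, eps=1e-6):
    """Population Stability Index: sum over bins of (cur - base) * ln(cur / base)."""
    base = bin_shares(baseline, edges, eps)
    cur = bin_shares(current, edges, eps)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


# Hypothetical feature values at training time and in production.
training_values = [1, 2, 3, 4, 5, 6, 7, 8]
production_values = [5, 6, 7, 8, 9, 10, 11, 12]
drift_score = psi(training_values, production_values, edges=[3, 6])
```

Identical distributions give a PSI of zero and the score grows as the two samples diverge. The alert threshold is a policy decision to be set per feature.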

The Data and Machine Learning Framework

The framework leverages the Four-Stage Platform to assess ML strategies through four dimensions—Acquire and Process, Visualize, Interact, and Retrieve—ensuring alignment with technical, ethical, and operational imperatives.

(I). Acquire and Process

Acquire and Process builds reliable foundations. Sub-layers include:

(I.1) Data Readiness

- (I.1.1.) - Quality: Ensures clean data.

- (I.1.2.) - Relevance: Aligns with goals.

- (I.1.3.) - Ethics: Mitigates bias risks.

- (I.1.4.) - Innovation: Uses augmentation.

- (I.1.5.) - Scalability: Handles large datasets.

(I.2) Model Design

- (I.2.1.) - Accuracy: Optimizes performance.

- (I.2.2.) - Simplicity: Balances complexity.

- (I.2.3.) - Fairness: Ensures equity.

- (I.2.4.) - Innovation: Tests new algorithms.

- (I.2.5.) - Sustainability: Minimizes compute.

(I.3) Feature Engineering

- (I.3.1.) - Utility: Enhances predictors.

- (I.3.2.) - Efficiency: Speeds processing.

- (I.3.3.) - Transparency: Tracks features.

- (I.3.4.) - Inclusivity: Reflects diversity.

- (I.3.5.) - Innovation: Uses auto-encoders.

(II). Visualize

Visualize ensures model reliability, with sub-layers:

(II.1) Performance Evaluation

- (II.1.1.) - Accuracy: Measures predictive correctness (e.g., accuracy, precision, recall).

- (II.1.2.) - Robustness: Tests scenarios.

- (II.1.3.) - Trust: Builds confidence.

- (II.1.4.) - Innovation: Uses ensemble validation.

- (II.1.5.) - Ethics: Checks fairness.

(II.2) Bias Mitigation

- (II.2.1.) - Fairness: Detects disparities.

- (II.2.2.) - Transparency: Explains outputs.

- (II.2.3.) - Compliance: Meets IEEE standards.

- (II.2.4.) - Innovation: Uses fairness tools.

- (II.2.5.) - Inclusivity: Represents all groups.
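Detecting disparities needs a measurable definition of fairness. The sketch below computes the demographic parity difference, the gap in positive-prediction rates between groups. It is one of several fairness metrics and is shown here as an assumption, not as the metric the framework mandates; the function names and group labels are hypothetical.

```python
def selection_rates(predictions, groups, positive=1):
    """Fraction of positive predictions within each group."""
    totals, positives = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if pred == positive else 0)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(predictions, groups, positive=1):
    """Largest gap in selection rate between any two groups (0.0 means parity)."""
    rates = selection_rates(predictions, groups, positive)
    return max(rates.values()) - min(rates.values())
```

A value near zero means the model selects each group at a similar rate. It says nothing about whether the predictions are correct, so it is usually read alongside error-rate metrics.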

(II.3) Explainability

- (II.3.1.) - Clarity: Simplifies decisions.

- (II.3.2.) - Accessibility: Reaches stakeholders.

- (II.3.3.) - Trust: Enhances adoption.

- (II.3.4.) - Innovation: Uses SHAP/LIME.

- (II.3.5.) - Ethics: Ensures accountability.

(III). Interact

Interact integrates models into operations, with sub-layers:

(III.1) Deployment Stability

- (III.1.1.) - Reliability: Ensures uptime.

- (III.1.2.) - Scalability: Handles demand.

- (III.1.3.) - Efficiency: Minimizes latency.

- (III.1.4.) - Innovation: Uses serverless.

- (III.1.5.) - Sustainability: Reduces compute.

(III.2) Integration

- (III.2.1.) - Interoperability: Connects systems.

- (III.2.2.) - Automation: Streamlines pipelines.

- (III.2.3.) - Resilience: Manages failures.

- (III.2.4.) - Ethics: Ensures fair access.

- (III.2.5.) - Innovation: Uses MLOps.

(III.3) User Adoption

- (III.3.1.) - Ease: Simplifies interfaces.

- (III.3.2.) - Training: Educates users.

- (III.3.3.) - Feedback: Captures insights.

- (III.3.4.) - Inclusivity: Supports diversity.

- (III.3.5.) - Innovation: Uses dashboards.

(IV). Retrieve

Retrieve ensures long-term performance, with sub-layers:

(IV.1) Model Monitoring

- (IV.1.1.) - Drift: Tracks shifts.

- (IV.1.2.) - Accuracy: Checks performance.

- (IV.1.3.) - Reliability: Prevents failures.

- (IV.1.4.) - Innovation: Uses auto-retraining.

- (IV.1.5.) - Ethics: Flags bias.

(IV.2) Updates

- (IV.2.1.) - Timeliness: Refreshes models.

- (IV.2.2.) - Versioning: Tracks changes.

- (IV.2.3.) - Compliance: Meets regulations.

- (IV.2.4.) - Innovation: Uses CI/CD.

- (IV.2.5.) - Sustainability: Optimizes resources.

(IV.3) Governance

- (IV.3.1.) - Accountability: Assigns ownership.

- (IV.3.2.) - Transparency: Logs decisions.

- (IV.3.3.) - Ethics: Upholds fairness.

- (IV.3.4.) - Innovation: Uses blockchain audits.

- (IV.3.5.) - Inclusivity: Engages stakeholders.

Methodology

The assessment is grounded in data science and AI governance, integrating ethical and operational principles. The methodology includes:

- Ecosystem Audit: Collect data via model reviews, logs, and stakeholder interviews.

- Performance Evaluation: Assess accuracy, fairness, and scalability.

- Gap Analysis: Identify issues, such as bias or drift.

- Strategic Roadmap: Propose solutions, from retraining to governance.

- Iterative Supervision: Monitor and refine continuously.
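The Gap Analysis step can be sketched as a simple threshold check over sub-layer scores. The framework does not publish a scoring scale, so the 0 to 5 scale, the threshold of 3.0, the scores themselves, and the function name `find_gaps` are all hypothetical and shown only to illustrate the idea.

```python
def find_gaps(scores, threshold=3.0):
    """Return (stage, sub_layer, score) tuples scoring below threshold, lowest first."""
    gaps = [
        (stage, sub_layer, score)
        for stage, sub_layers in scores.items()
        for sub_layer, score in sub_layers.items()
        if score < threshold
    ]
    return sorted(gaps, key=lambda gap: gap[2])


# Hypothetical assessment scores on an assumed 0-5 scale.
assessment = {
    "Acquire and Process": {"Data Readiness": 2.0, "Model Design": 4.0, "Feature Engineering": 3.5},
    "Visualize": {"Performance Evaluation": 3.0, "Bias Mitigation": 2.5, "Explainability": 4.0},
    "Interact": {"Deployment Stability": 4.5, "Integration": 3.5, "User Adoption": 3.0},
    "Retrieve": {"Model Monitoring": 3.5, "Updates": 4.0, "Governance": 3.0},
}
gaps = find_gaps(assessment)
```

Sorting the lowest scores first gives the Strategic Roadmap step a natural priority order.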

Machine Learning Value Example

The framework delivers tailored outcomes:

- Startups: Launch predictive models with lean data.

- Medium Firms: Optimize operations with NLP.

- Large Corporates: Scale AI with automated pipelines.

- Public Entities: Enhance services with fair models.

Scenarios in Real-World Contexts

Small Retail Firm

A retailer seeks better predictions. The assessment reveals poor data quality (Acquire and Process: Data Readiness). Action: Clean the data and, where classes are imbalanced, rebalance with SMOTE. Outcome: Forecast accuracy up 15%.

Medium Healthcare Provider

A provider aims to improve diagnostics. The assessment notes weak validation (Visualize: Bias Mitigation). Action: Use fairness metrics. Outcome: Equity improves by 12%.

Large Tech Firm

A firm needs scalable AI. The assessment flags slow deployment (Interact: Deployment Stability). Action: Use Docker. Outcome: Rollout time cut by 20%.

Public Agency

An agency wants transparent AI. The assessment identifies lax governance (Retrieve: Governance). Action: Implement audits. Outcome: Trust rises by 18%.

Get Started with Your Data and Machine Learning Assessment

The framework aligns AI with goals, ensuring precision and ethics. Key steps include:

Consultation

Explore ML needs.

Assessment

Evaluate models comprehensively.

Reporting

Receive gap analysis and roadmap.

Implementation

Execute with continuous supervision.

Contact: Email hello@caspia.co.uk or call +44 784 676 8083 to advance your AI capabilities.
